Survey Findings: The State of AI Implementation and Program Management in Financial Services & Insurance

Rehgan Bleile

Financial services enterprises are accelerating their forays into AI. Budgets are up, ambitions are high, and the pressure to show ROI has never been greater. Yet despite years of investment and experience, AI initiatives still aren’t reaching production or delivering value fast enough.

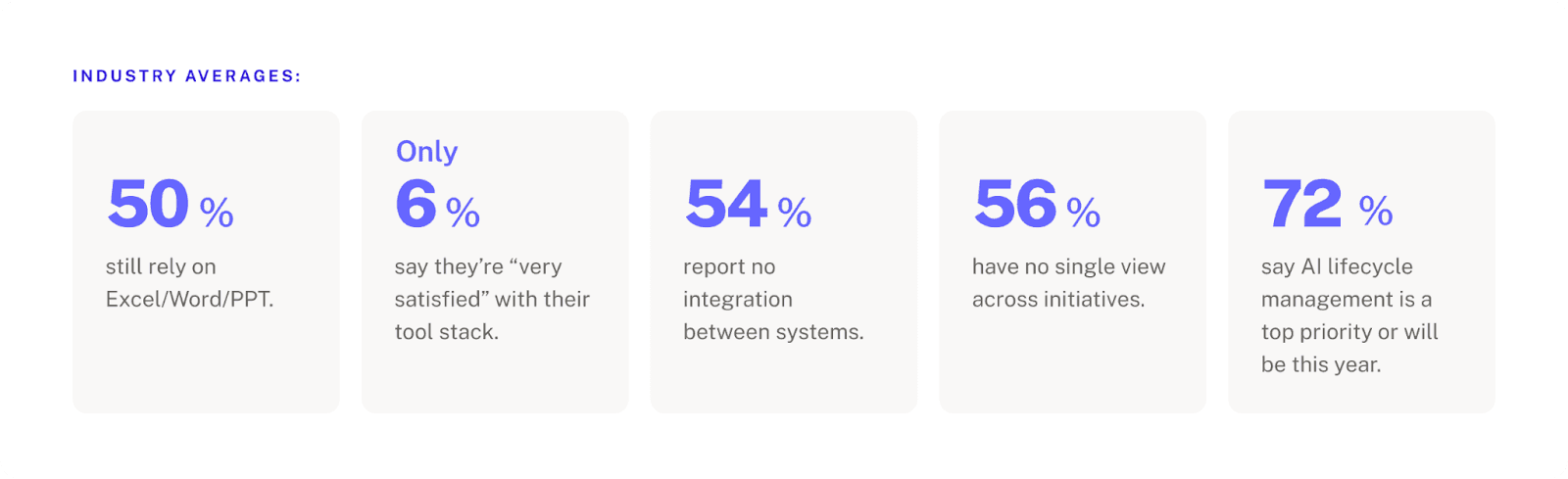

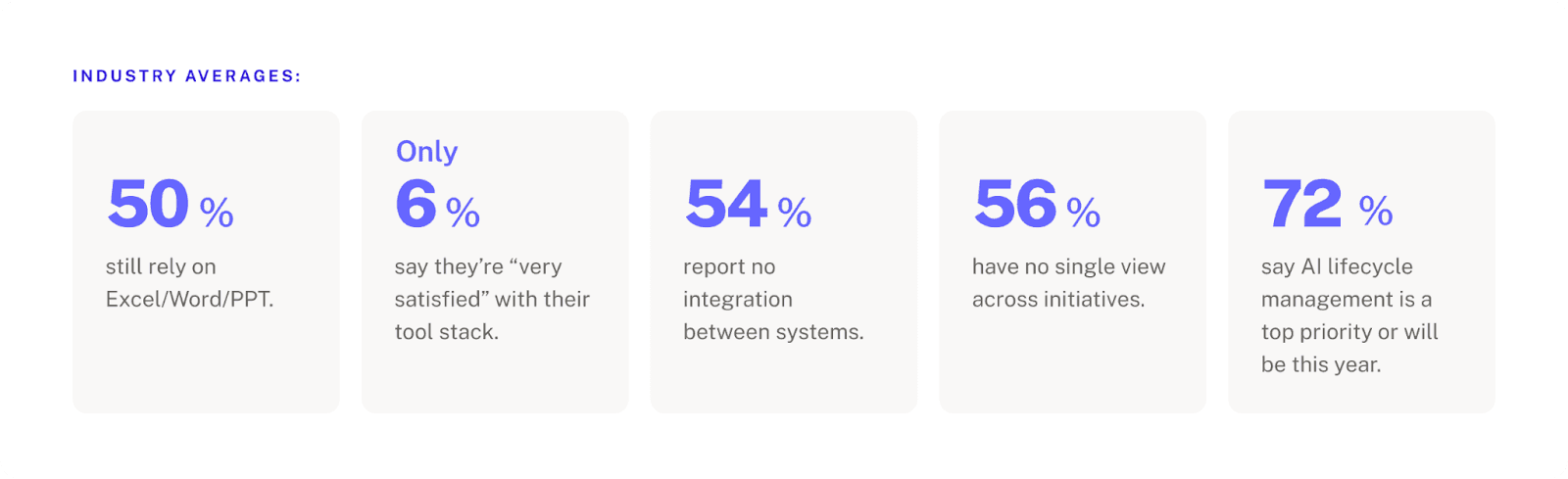

NVIDIA's 2026 State of AI report shows 86% of financial services organizations plan to increase their AI budgets this year. Deloitte's latest research puts 54% of organizations across all industries on track to double their AI projects in production in the next six months. Our own survey data shows that 98% of respondents agree on what the ideal AI lifecycle management solution should look like.

So why are these same organizations still running their AI programs through Excel and not hitting their AI production timelines and goals?

This is the question we set out to answer. We partnered with the Gerson Lehrman Group (GLG) to survey senior AI leaders across the insurance, banking, and financial services industries. These are the people designing, coordinating, and governing enterprise AI programs.

The data revealed a clear operations gap. The biggest barriers to deploying AI stem from cross-functional coordination issues, a lack of end-to-end ownership, and fragmentation across tools not built for the AI lifecycle. Here’s what stood out:

Below, we break down key findings from the survey, and what they mean for AI program leads trying to navigate them.

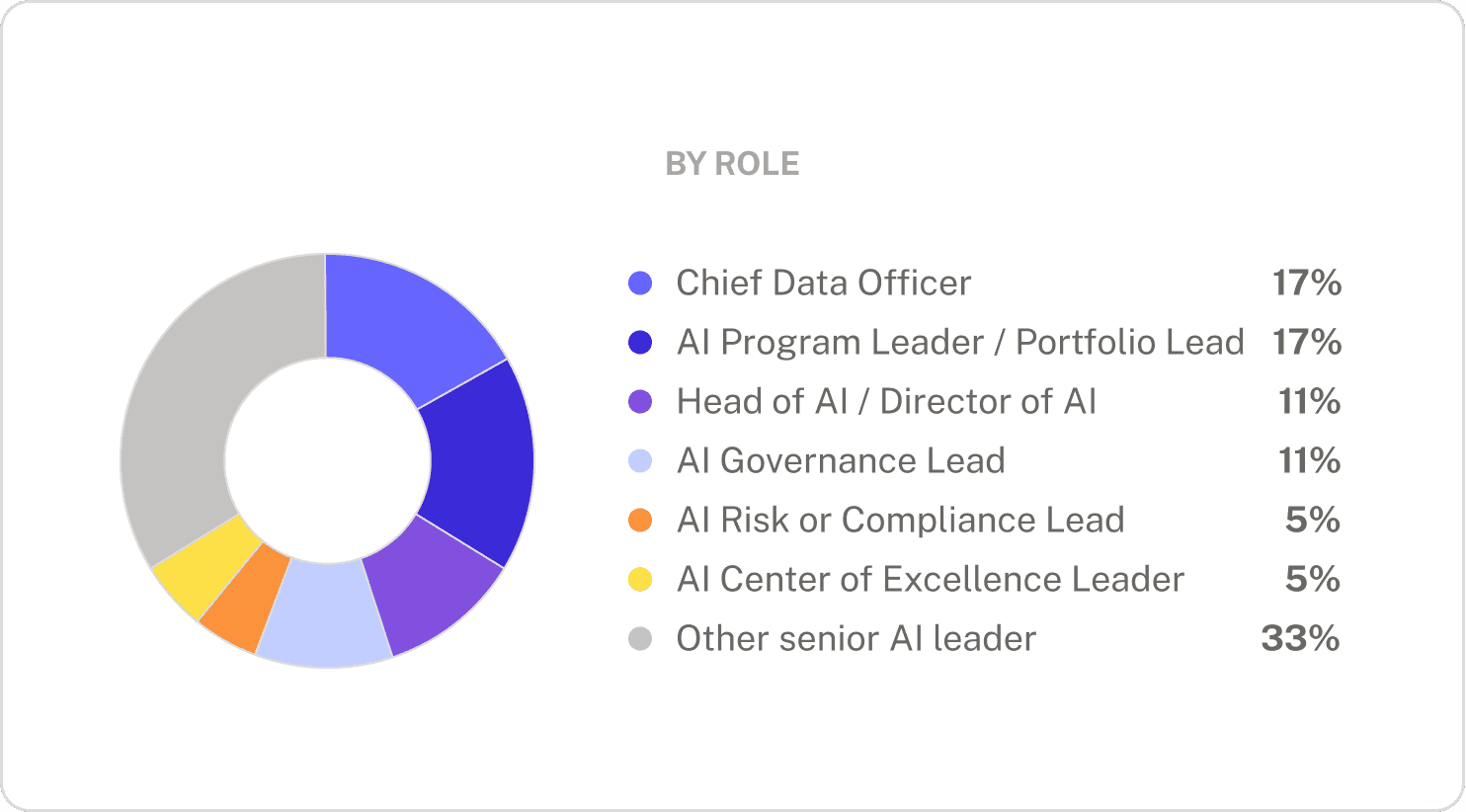

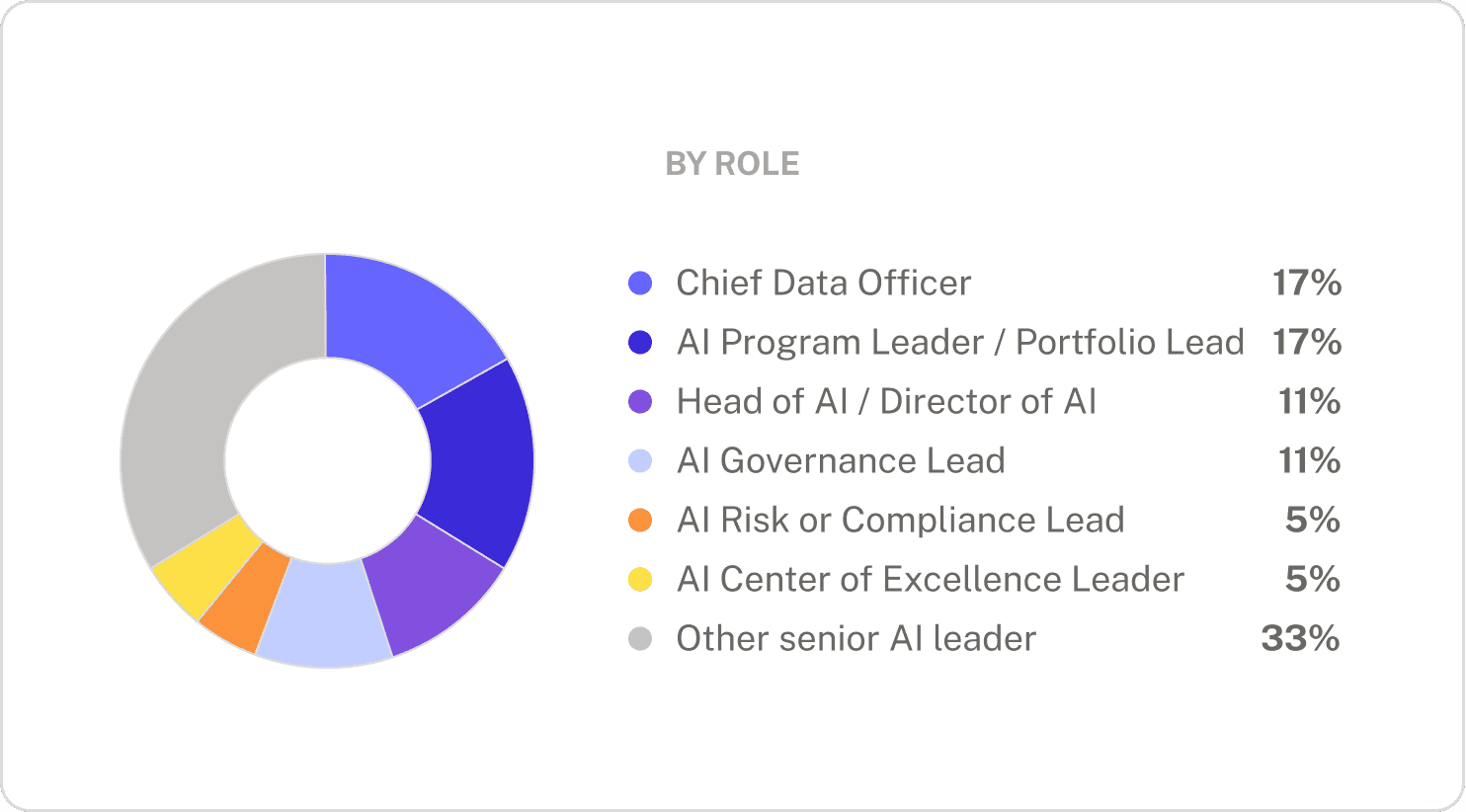

Who we surveyed

*“Other senior AI leader” roles include: VP in Pilot Programs, SVP of Research & Strategy, Board Member, Principal Architect-AI and Data, IT Examination Manager, AI Development for Claims

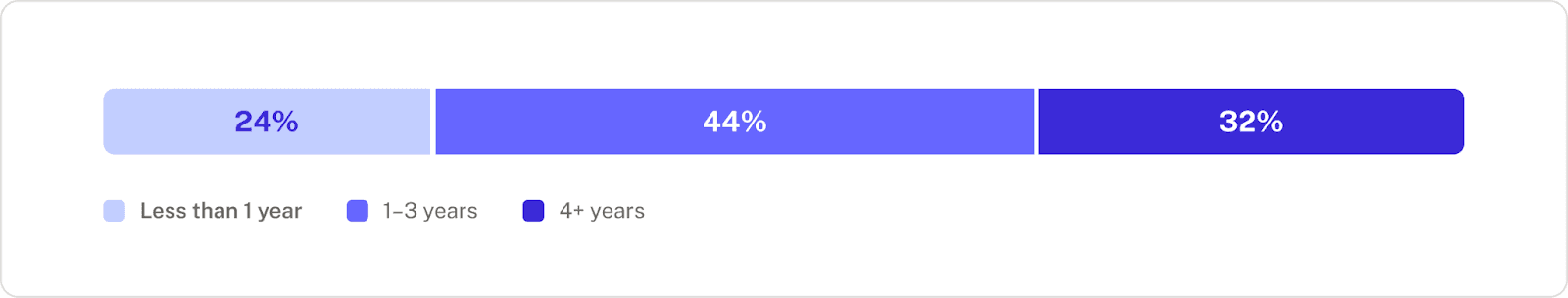

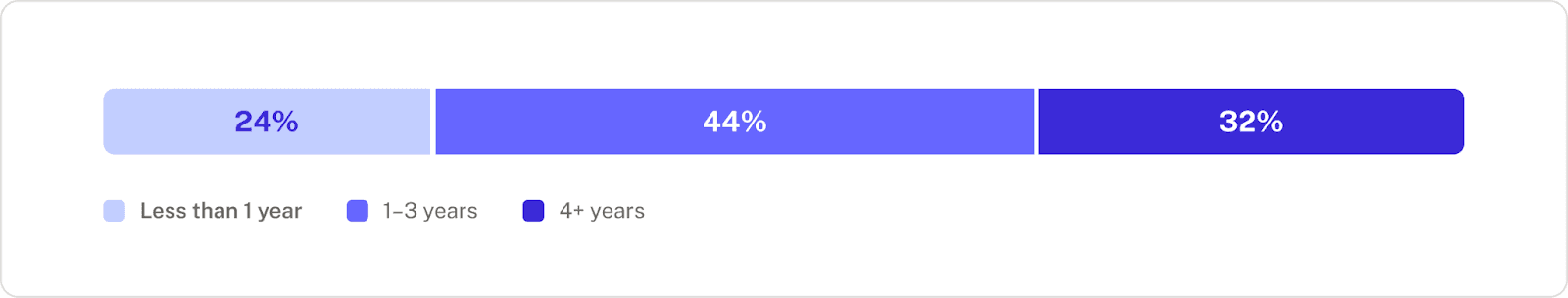

Years of experience implementing AI programs

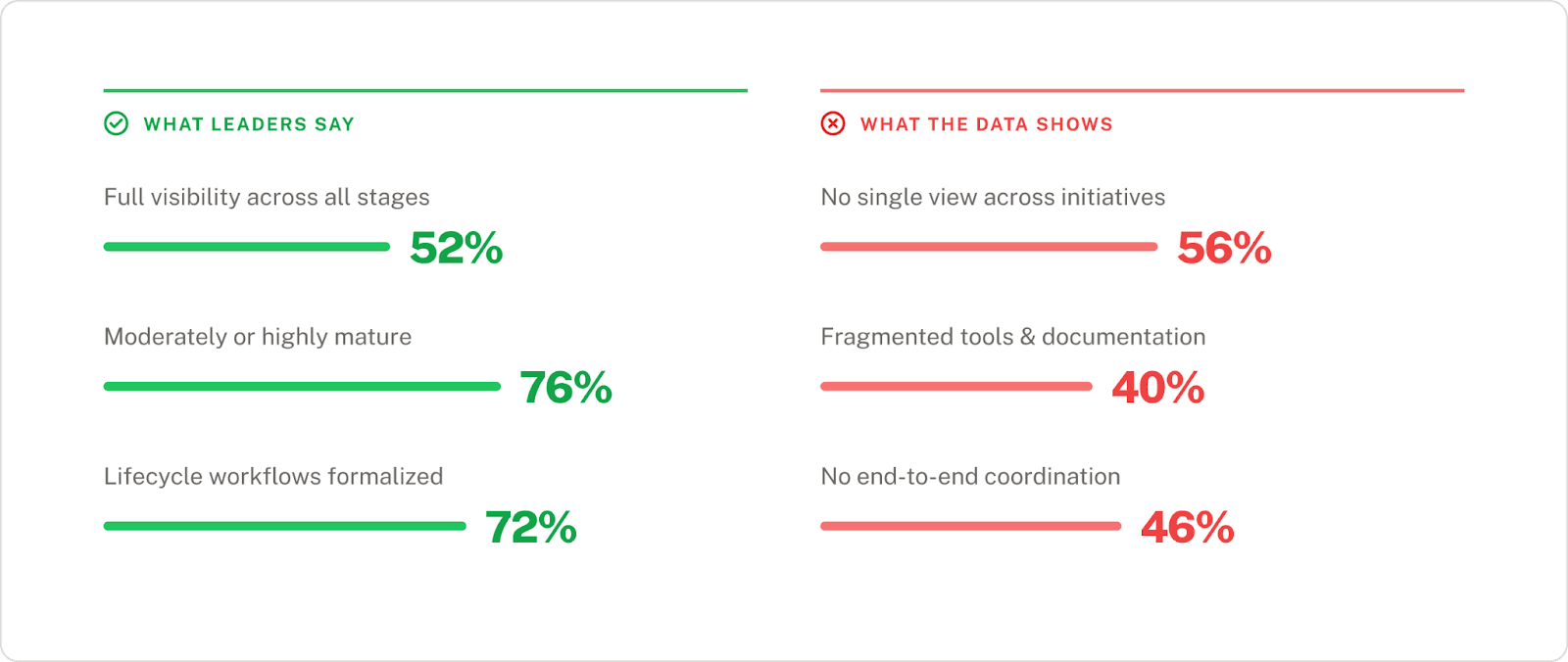

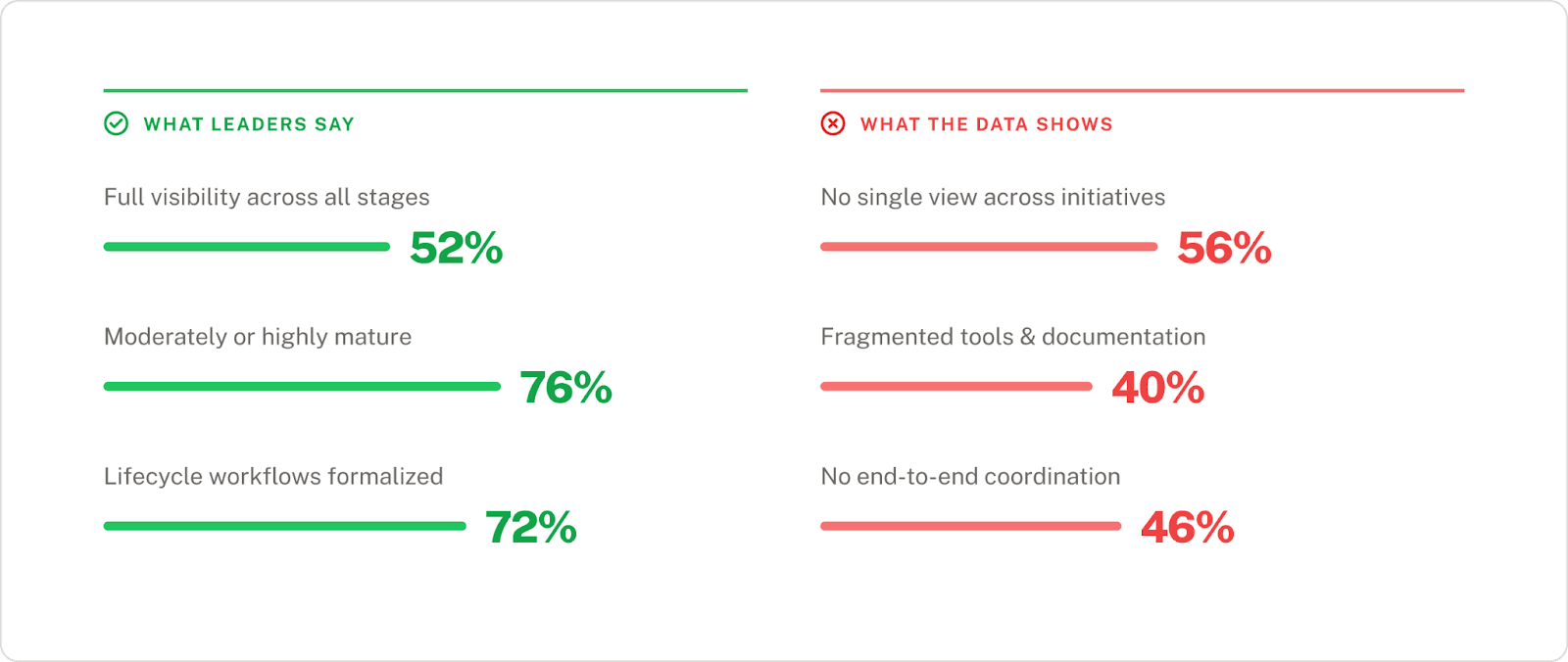

Finding 1: 52% of leaders say they have full lifecycle visibility, yet 56% say they lack a single view across initiatives

On the surface, the survey results look encouraging. More than half of respondents (52%) say they have "full visibility" across all stages of the AI lifecycle, and 76% rate their operations as moderately or highly mature. Most have formalized at least some lifecycle workflows: 72% have a structured intake and backlog process, and over half have formalized prioritization, risk evaluations, and design-phase workflows.

But when we asked about specific operational capabilities, the picture shifted.

What respondents reported about their tooling and coordination gaps

These aren't different groups. The same people who report full visibility and maturity also report struggling with ground-level coordination and integration across those same systems.

“Visibility” for leaders may rest on invisible manual work

The problem may be that “visibility” means something different to the executives asking for updates than to the AI program managers pulling those updates together. For many program managers, this means pulling data and details from Jira, Excel spreadsheets, SharePoint docs, and more. At AlignAI, we tend to see AI programs move through a fragmented process, with no connected system that sits on top of it all. So what a leader may see as a mature operation is actually based on someone else cobbling it all together.

This becomes a problem when the people with the authority to fix the issue are not the ones experiencing it. Eventually, manual reporting won’t be able to explain what’s stalled and why, where a compliance gap is, or what an AI program’s true value is.

This pattern holds across industries. Both banking and insurance respondents exhibited this perception gap. Insurance respondents were actually more explicit about the operational reality, naming end-to-end coordination gaps and post-production monitoring needs unprompted, even for smaller organizations ($1B–$5B).

Visibility must be systemic, not manually produced

The question isn't whether your organization has visibility. It's whether that visibility is systemic (real-time, traceable, and connected across every stage of the lifecycle) or whether it's a person chasing information on behalf of someone who asked.

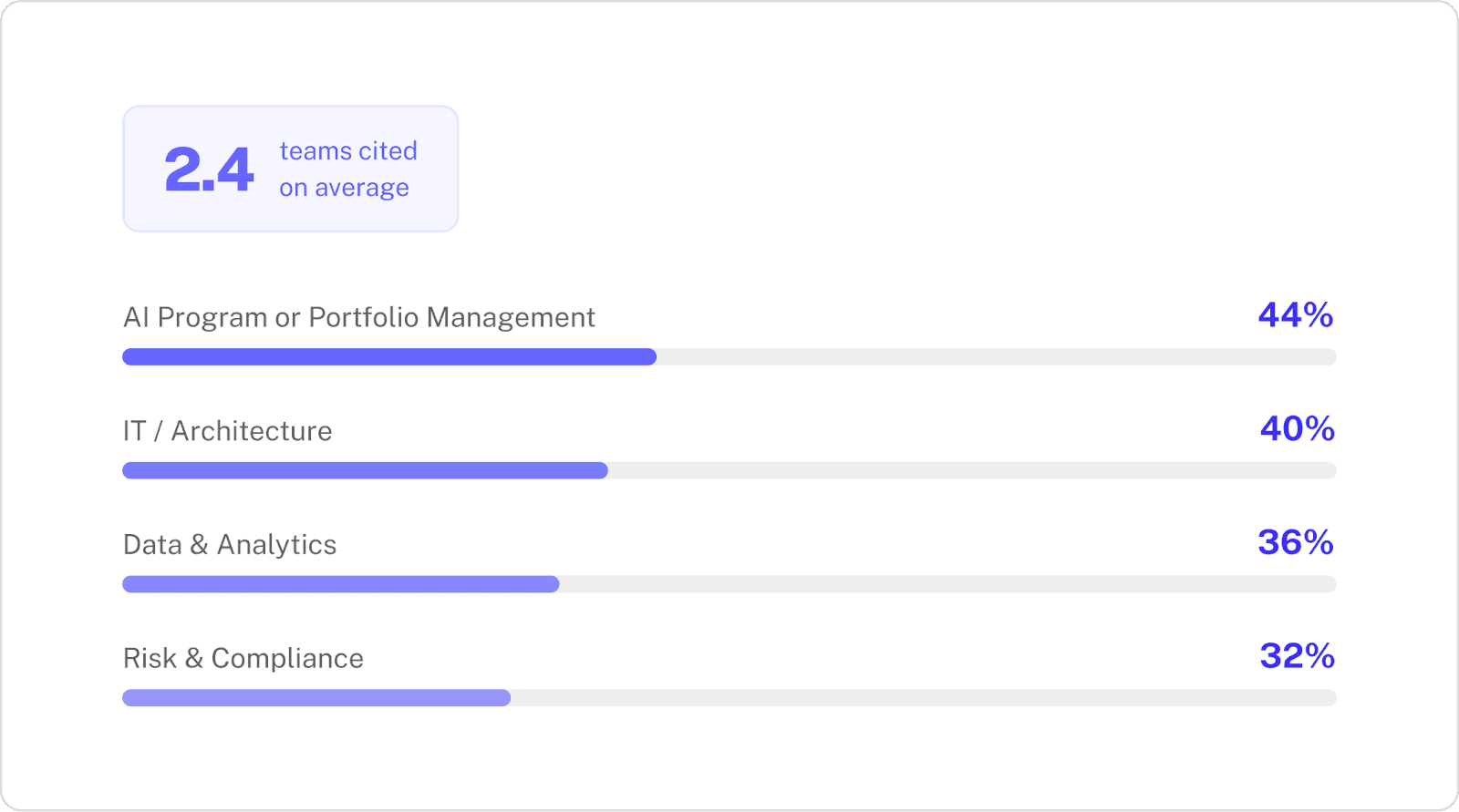

Finding 2: AI lifecycle ownership is spread across multiple owners, with weak alignment between them

Finding 1 showed that the underlying processes and tools to manage the AI lifecycle are fragmented, and this finding explains why they’ll remain that way. You can redesign a workflow or adopt a new platform, but if nobody has the mandate or the infrastructure to connect the full lifecycle, the drag will persist.

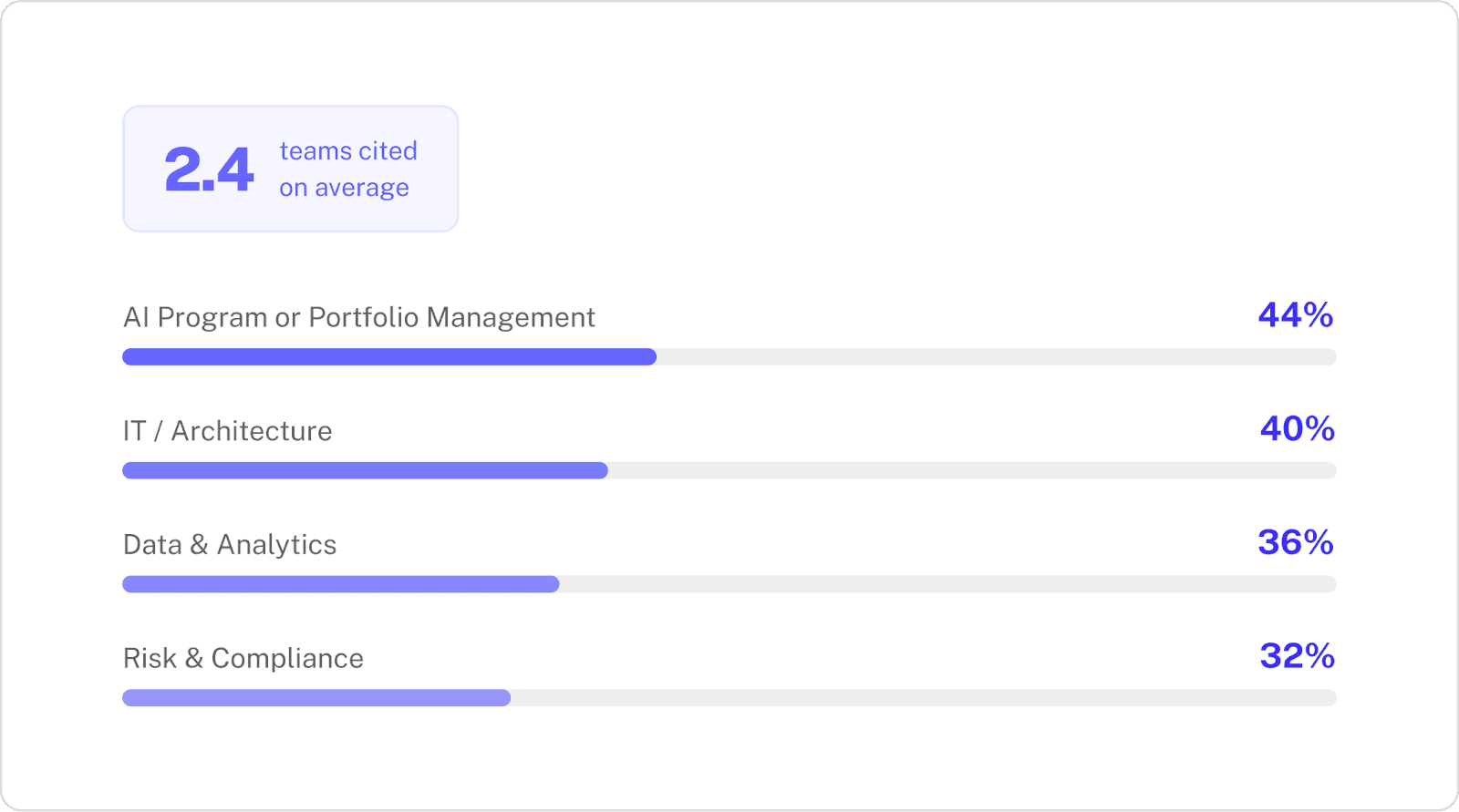

When we asked who owns the coordination of AI initiatives across the lifecycle, respondents didn't point to one team. On average, they cited 2.4 different owners. AI Program Management, IT, Data & Analytics, and Risk & Compliance were all named at similar rates. This shows multiple teams are responsible, but no single team truly owns the AI lifecycle end-to-end. That’s why handoffs fall through: there’s no prioritization logic and no clear owner for moving an AI initiative forward.

Who respondents say owns AI lifecycle coordination

This isn't shared ownership; it's diffused accountability.

If everyone owns the AI lifecycle, nobody owns it

If no one fully owns the connective tissue between teams and the end-to-end lifecycle, coordination will default to whoever is willing, or simply assigned, to reconcile the data and results across teams and tools. This process will break as soon as your AI portfolio grows beyond a few initiatives, and it compounds the overarching problem the survey revealed: AI programs are not equipped to run consistently or quickly.

Finding 3: Tool fragmentation and incomplete data readiness are other top problems

Findings 1 and 2 relate to cross-functional coordination problems that some teams still fail to perceive fully. Finding 3 gets into the operational specifics and the day-to-day pain that is clearly felt by everyone who touches the AI lifecycle.

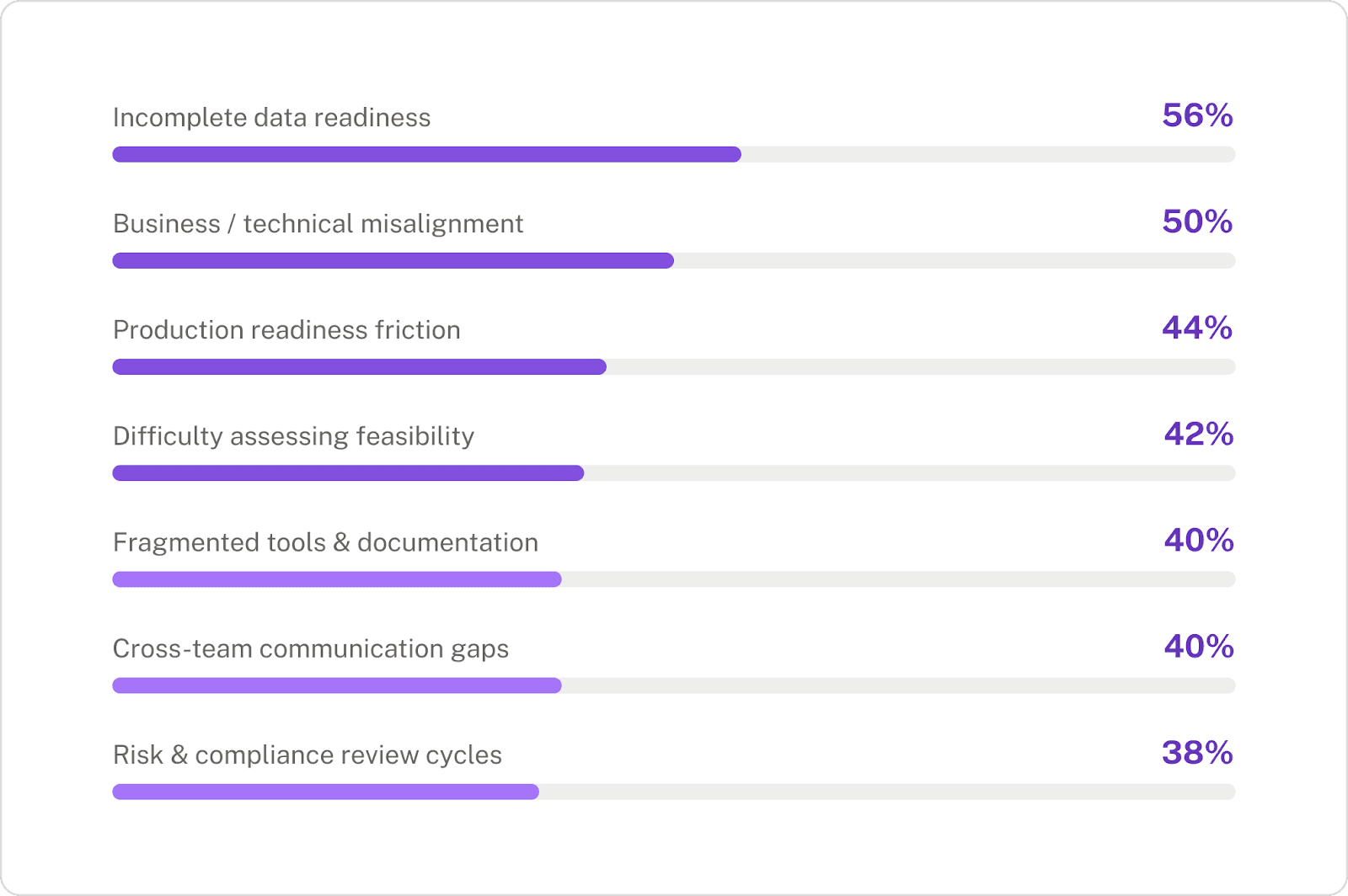

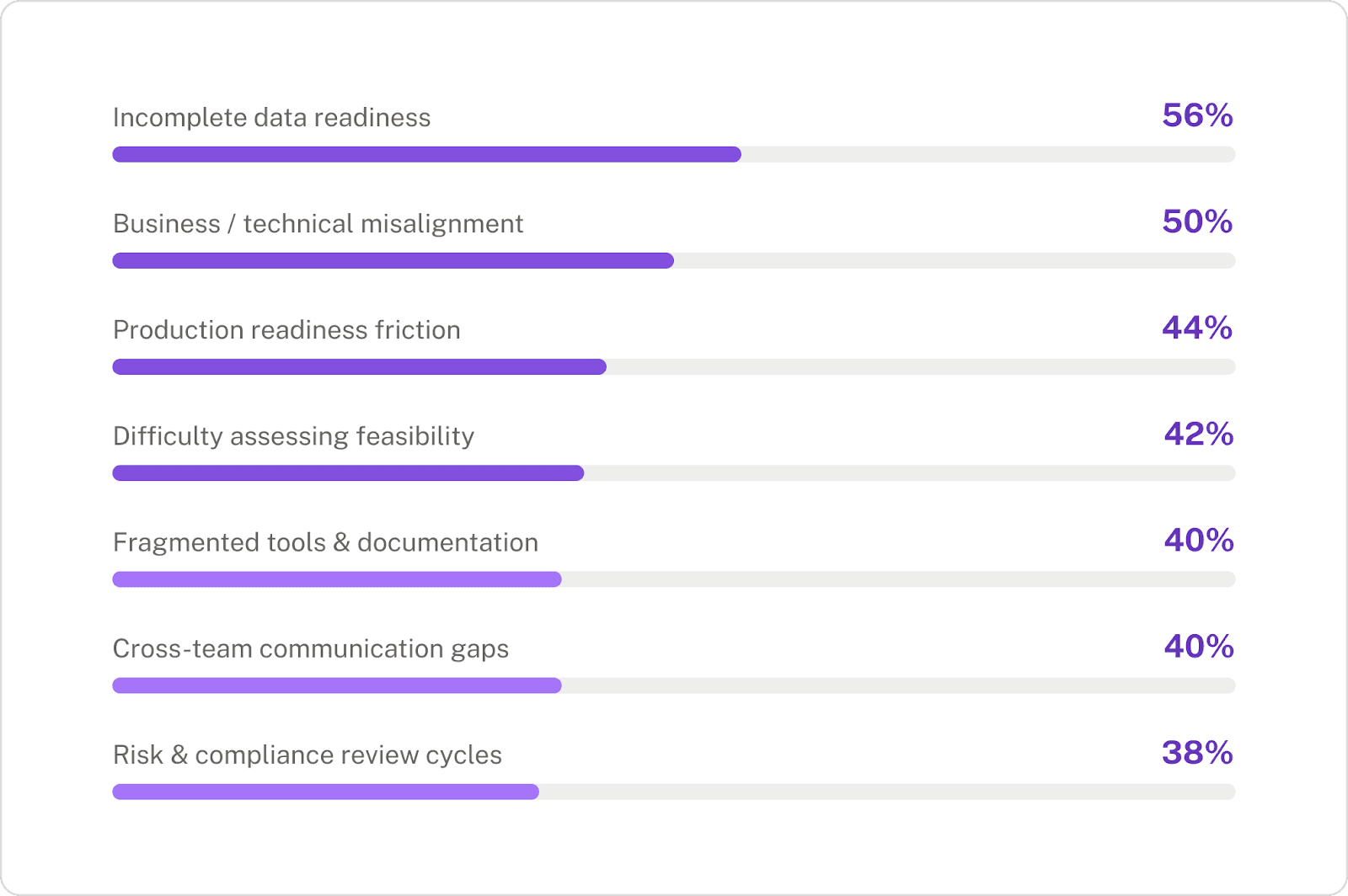

Top challenges slowing AI initiatives

What’s notable here is that there isn’t a single main bottleneck, but a general spread of them. The top five challenges span data, alignment, tooling, and governance. These compound across the lifecycle and reinforce the ownership and collaboration problems.

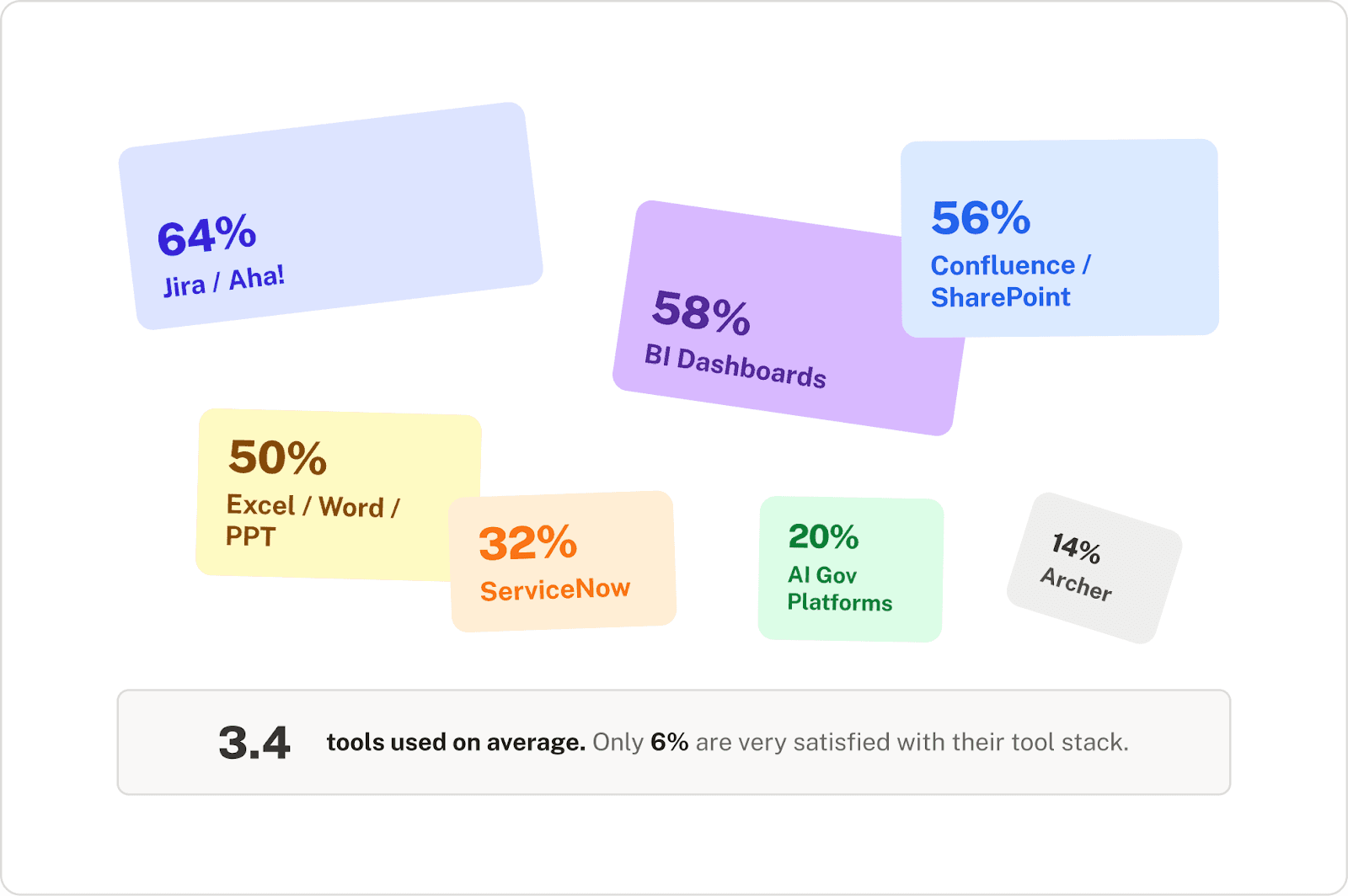

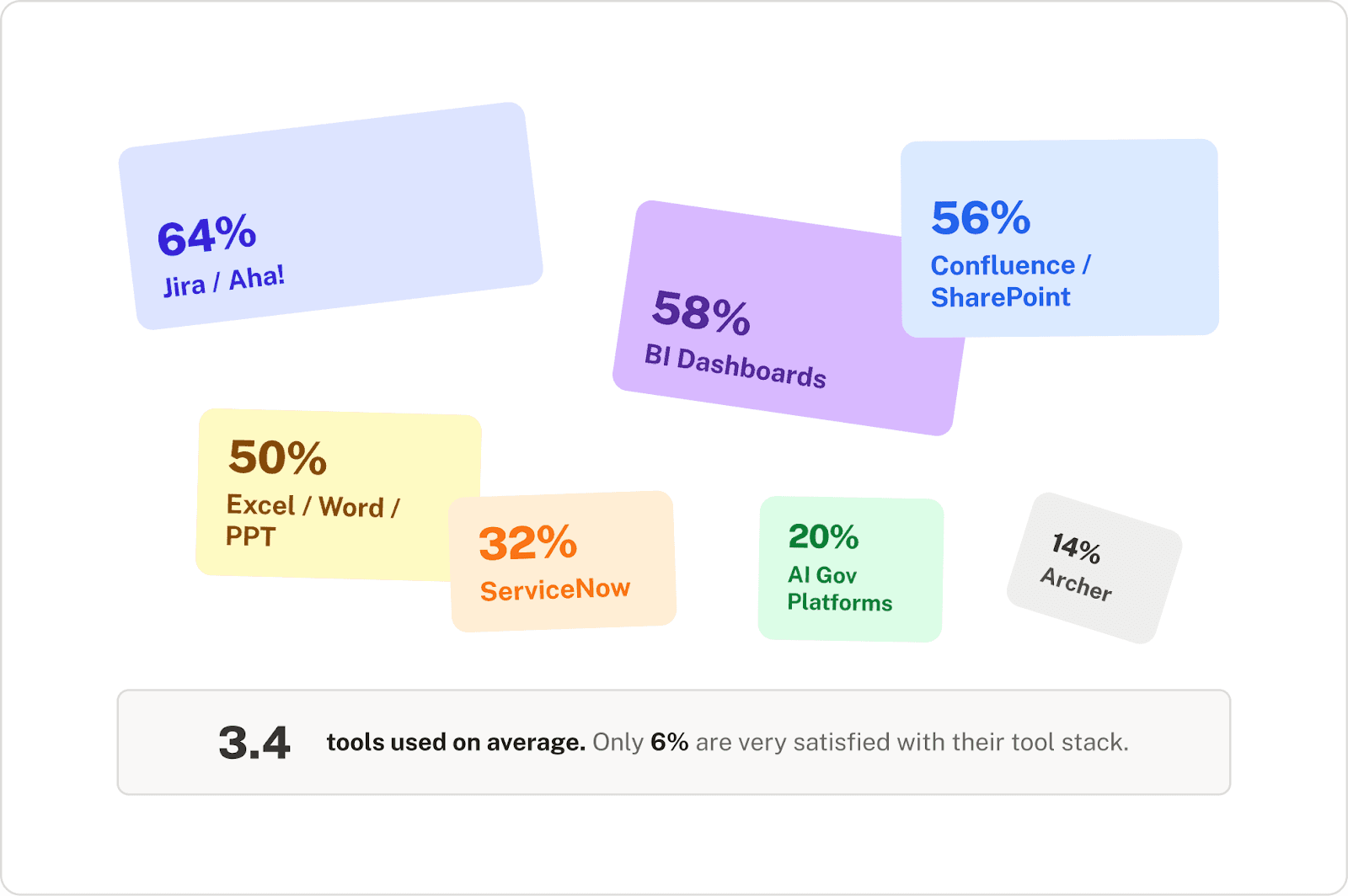

The AI lifecycle “tech stack” looks more like a patchwork

When we asked what teams actually use to manage the AI lifecycle today, no single tool dominated. Instead, teams reported a general spread across tools that were never designed to work together for AI lifecycle management. On average, respondents reported using 3.4 different tools.

A smattering of tools, with half of respondents relying on Excel, is not a tech stack. It's a patchwork. Each disconnected tool is another opportunity for data and decision continuity to drop off. Bridging the gap between these tools is possible, but it requires costly in-house builds that pull engineering resources away from other work.

Respondents' satisfaction with their current setup reinforces this. Only 6% say they're "very satisfied" with their tool stack for managing AI across the lifecycle. 28% are actively dissatisfied. And 74% describe themselves as only "somewhat satisfied" with their end-to-end workflow. Organizations tolerate their tools, rather than trust them.

The complexity grows as organizations source AI from different vendors

Adding to the complexity: 56% of respondents source AI from multiple vendors. This jumps to 71% for banking institutions with $100B–$250B in assets, and 100% for insurers with $5B–$10B in assets. They're not managing a single homegrown system; they're managing a portfolio of built and bought solutions across different providers, each with its own documentation, risk profile, and update cadence. Unsurprisingly, insurance respondents at the $5B–$10B tier explicitly named vendor sprawl as the main pain point, wanting “ideation to deployment and monitoring without the need for multiple vendors.”

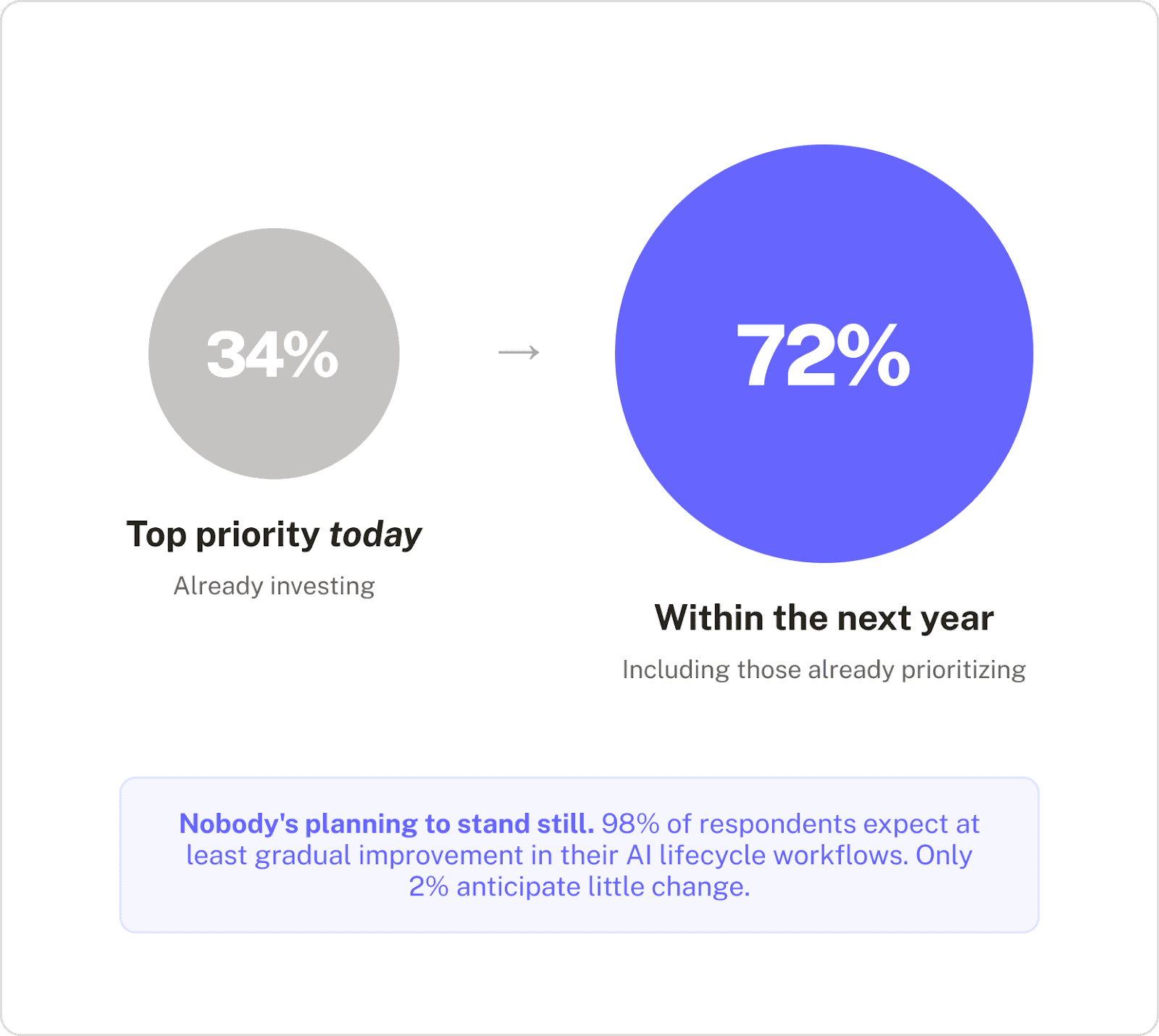

Finding 4: AI lifecycle management will be a top priority for most within the year

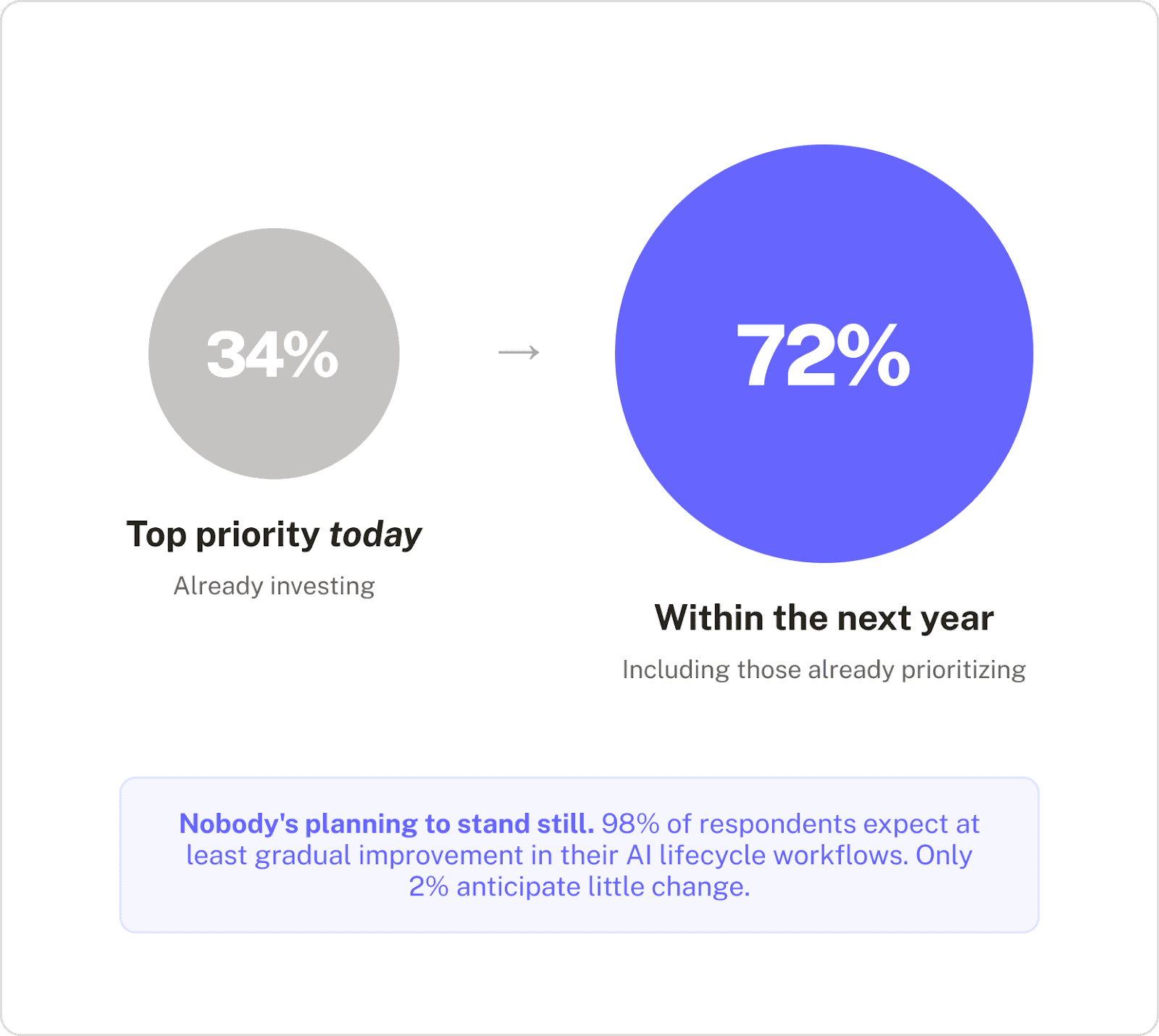

The first three findings describe structural problems. Finding 4 suggests that a growing number of organizations will move to solve them in the coming year, and with increased urgency.

Urgency signals for AI lifecycle management

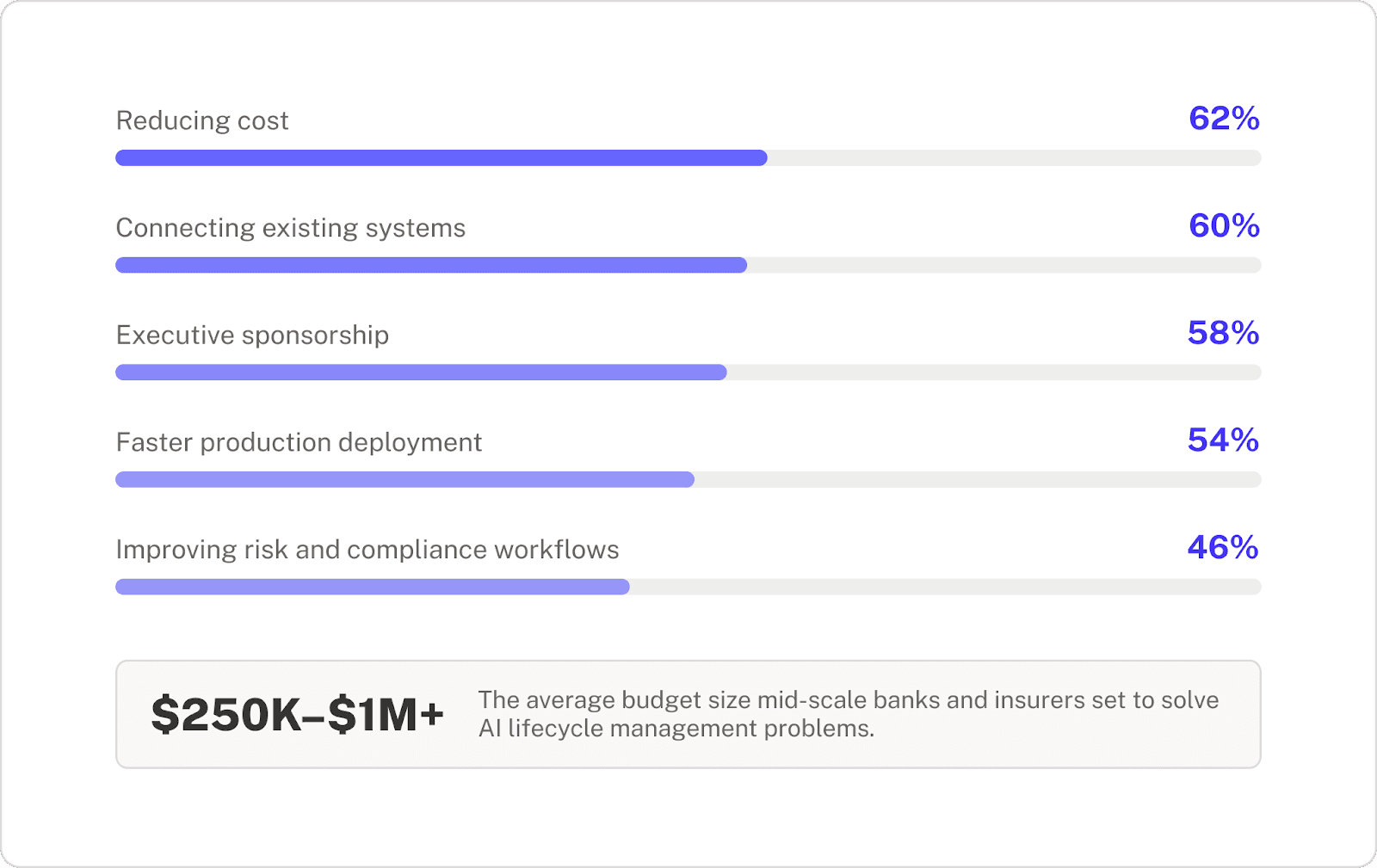

Budgets support the urgency

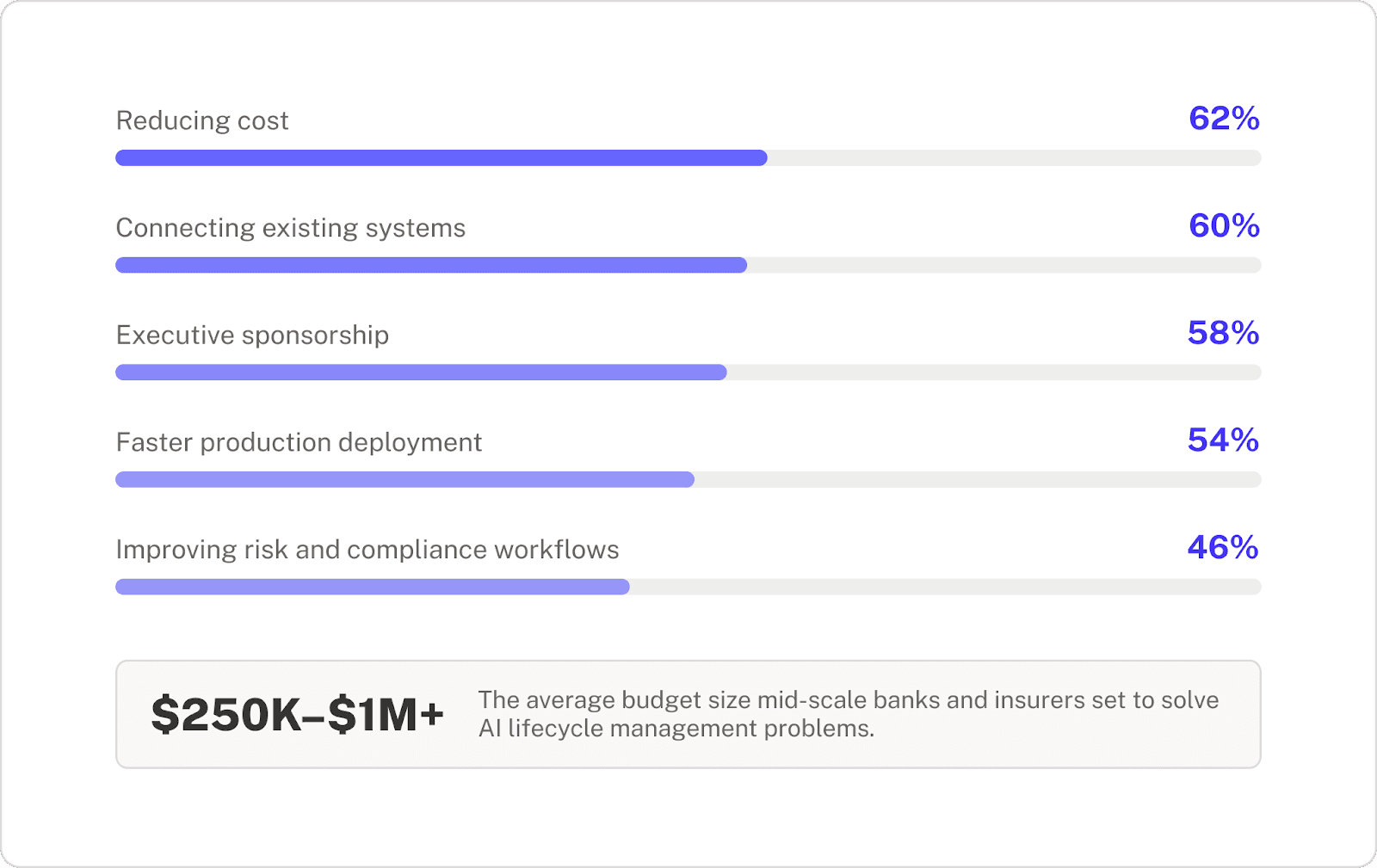

This push isn’t aspirational. Across mid-scale banks and insurers, budgets in the $250K–$1M+ range are actively allocated to solve AI lifecycle and program management challenges. Procurement decisions are already being made, and the top factors for adopting a tool are reducing cost and connecting existing systems. In addition, 94% say they would be very or somewhat likely to evaluate or purchase a platform that reduces review cycles, meetings, and cross-team friction by 30–50%.

The window is narrowing

With many organizations setting their sights on AI lifecycle management as a top priority, piloting solutions, and allocating budget, the gap between organizations that figure this out and those that don't will widen. The cost of the status quo isn't just the coordination overhead. It's the compounding opportunity cost of every quarter your AI program can't prove its value or move its best initiatives to production.

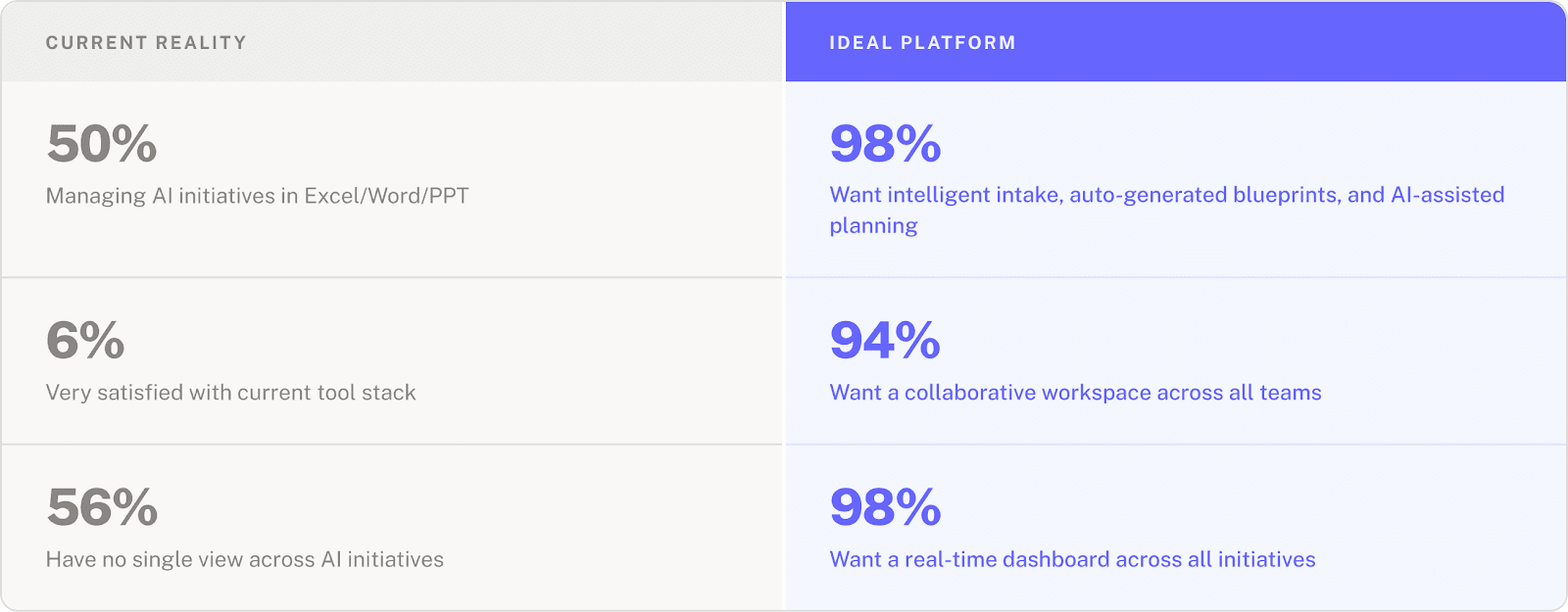

Finding 5: Leaders agree on what they want in a solution, but don’t have it yet

While the pain points span an array of problems, one of the most striking results from the survey is how clearly respondents can articulate what they think the solution is.

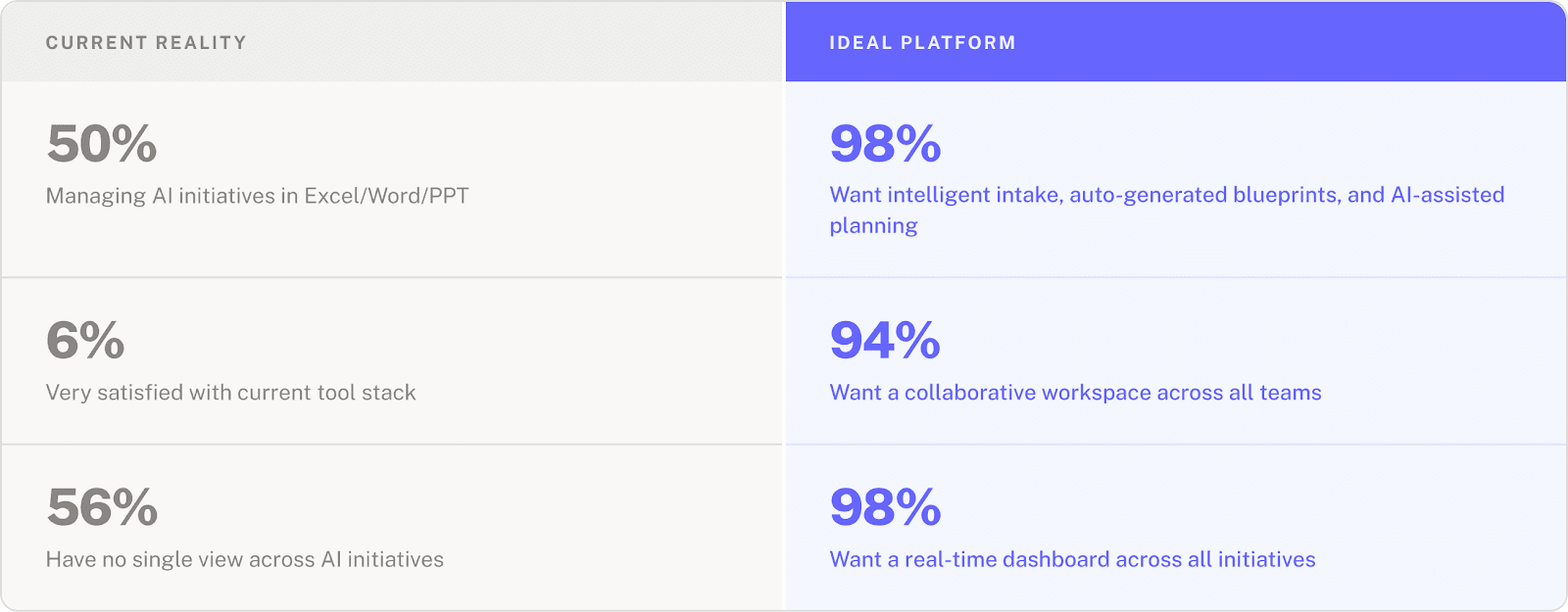

What an ideal AI lifecycle solution could include vs. what they have today

Program managers, CDOs, and governance leads don’t have different wishlists. They’ve all landed on the same set of capabilities: structured intake, automated documentation, real-time visibility, and lifecycle-connected monitoring.

The market isn't confused about what "good" looks like. It just doesn't have it yet

Nothing in the current patchwork of Jira, SharePoint, Excel, and BI dashboards was designed to deliver these capabilities end to end. And bolting on more point solutions only deepens the fragmentation that respondents are already telling us is a top challenge.

There’s broad tolerance for current tooling, but near-universal demand for better capabilities. That gap between knowing there’s a problem and doing something about it is where the real cost accumulates, and it only continues to widen as organizations grow their AI programs.

AI brings new challenges that go beyond this familiar enterprise problem

Cross-functional coordination and tool consolidation challenges aren't unique to AI. But AI introduces a distinct set of requirements that generic project management and GRC tools weren't built for:

Risk evaluation that reflects the complexity of AI systems.

Holistic feasibility assessments that include data evaluations and process changes, in addition to technical evaluations.

Governance that needs to be embedded into the workflow rather than layered on top.

Monitoring that ties reality to intent.

The specificity of what respondents described reflects this. They're not asking for a better project tracker; they're describing an operating layer purpose-built to manage AI from idea to production and beyond.

What these findings mean for AI program leaders

Takeaway 1: Visibility and maturity based on manual processes create blind spots that compound over time.

True maturity and visibility hinge on access to real-time, traceable, and connected data at every stage, not on someone manually assembling updates from static media such as Excel and PowerPoint.

The gap between perceived and actual operational maturity is where compliance risks, stalled initiatives, and unproven ROI hide.

Takeaway 2: When everyone owns the AI lifecycle, nobody does.

Multiple teams owning the lifecycle isn’t shared ownership, but diffused accountability.

Until someone (or something) is the single connective layer across those teams, coordination will be an obstacle.

Takeaway 3: The tool stack is tolerated, not trusted.

Few are satisfied with running AI lifecycle management through existing tools: only 6% say they're "very satisfied" with their tool stack.

The gap between what teams use and what they actually need is only growing as AI programs mature.

Takeaway 4: AI lifecycle management will be a top priority this year.

72% of respondents expect AI lifecycle management to become a top priority within the next year, and budgets are already being allocated.

Organizations that move now will compound their advantage. Those that wait will face a growing gap between what their AI programs promise and what they can deliver.

Takeaway 5: Leaders agree on what a good solution looks like, but they don’t have it yet.

Almost all respondents independently describe wanting the same capabilities: intelligent intake, real-time portfolio visibility, automated blueprints.

The obstacle isn't clarity about the solution. It's that the current tool stack can't deliver it, and the organizational will to change hasn't caught up to the operational need.

Make these findings actionable for your organization

These results mirror conversations we have with teams implementing AI in regulated enterprises. We see so much appetite to implement and experiment with AI programs, but the coordination, tooling, and ownership model simply haven’t caught up.

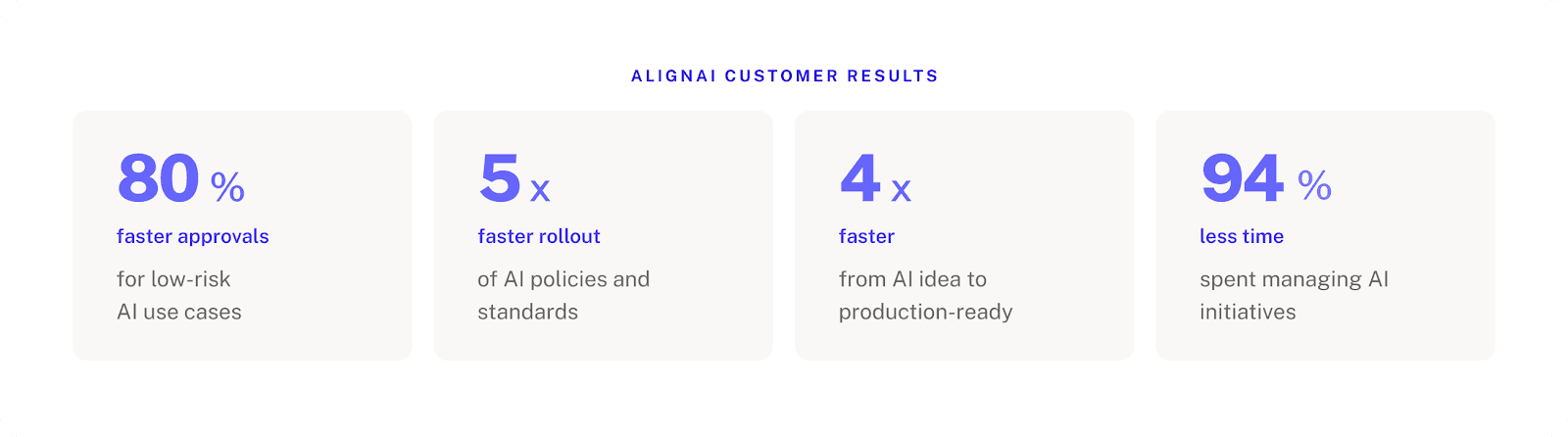

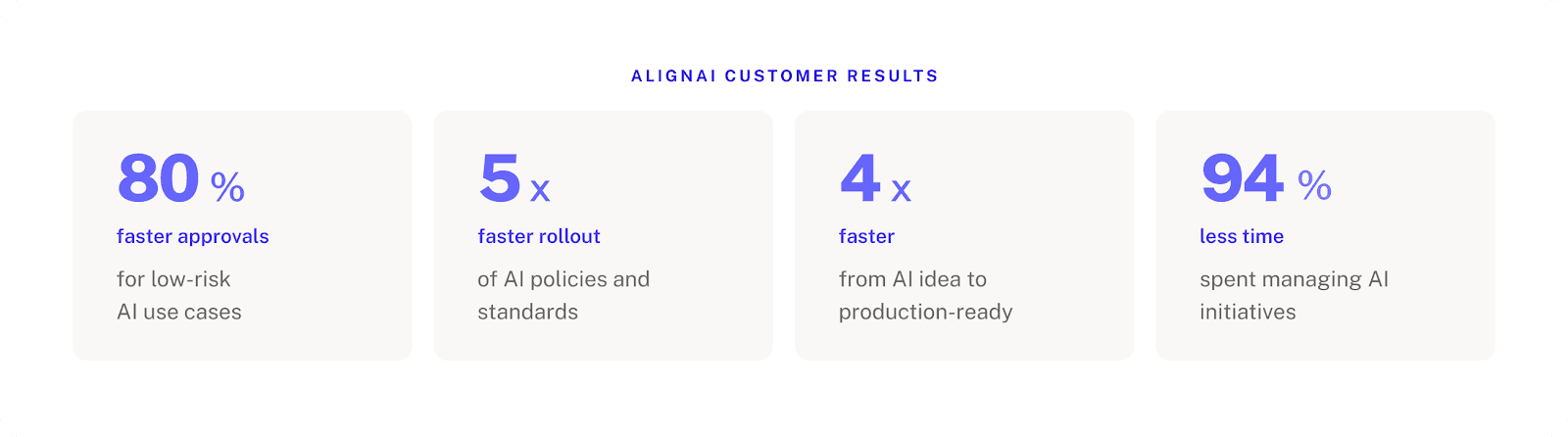

They also reinforce our conviction that the missing piece isn’t another point solution tossed in with the rest, but rather an operating model that connects the entire lifecycle and the people working across it, end-to-end. This is exactly what we built AlignAI to do. Our customers get results that directly address the hidden and all-too-real pain points this survey uncovers:

If the findings and challenges revealed in this report reflect your organization's reality, we'd welcome the conversation to see how AlignAI can help you address them.
